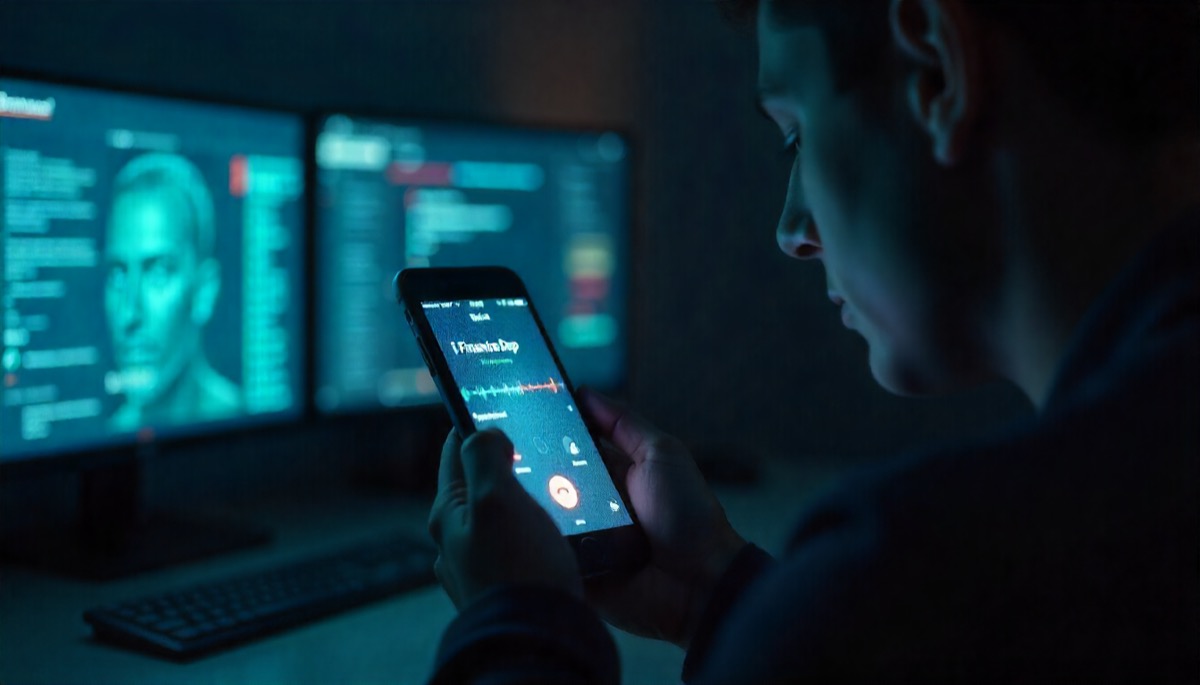

Phones used to carry a built-in assumption: a familiar voice meant a familiar person. That quiet rule is breaking. Voice cloning, real-time video manipulation, and scripted scam playbooks now sit inside cheap tools instead of secret labs. The result is a slow erosion of one of the last analog signals of trust: how a call sounds in the first seconds.

The same internet that delivers entertainment, from streaming series to a quick online game session, also delivers something darker: accessible AI models, leaked voice samples, open-source face-swap tools, and step-by-step guides to social engineering. Scammers no longer need brilliant improvisation. Technology supplies the mimicry. Scripts supply the manipulation.

From crude fakes to precision fraud

Early deepfakes looked like broken masks: awkward lips, wrong timing, robotic sound. Now short samples from voicemail, podcasts, streams, or random clips are enough to build clones that survive casual listening. Add spoofed numbers and urgent scenarios, and many targets react before suspicion catches up.

Finance departments, parents, remote teams, charities and suppliers have all faced calls that mix real context with synthetic voices. The attack no longer relies on obvious grammar mistakes or foreign call centers. It relies on confidence, plausible details, and speed.

New-generation tactics that feel dangerously real

- cloned voices giving “updated” payment details to finance staff

- fake video calls with a blurred background and a small window to hide artifacts

- emergency family calls demanding instant transfers “before it is too late”

- impersonated executives pushing secret deals or urgent invoices

- support agents mimicking official hotlines to harvest codes and logins

The danger is not Hollywood-level perfection. The danger is “good enough” during stressed, sleepy, or rushed moments.

Psychological levers, not just technical tricks

Deepfake scams work because they hijack instincts. Recognition of a voice triggers care. Display of a known number triggers compliance. Words like “urgent,” “confidential,” and “only a few minutes” narrow rational thinking. Attackers stack these signals. AI simply makes impersonation faster, cheaper and more scalable.

Trust fatigue grows with each incident. Once a few high-profile cases circulate, entire groups begin to question even legitimate calls. That skepticism protects, but it also slows real work and strains relationships. The social cost arrives quietly: more callbacks, more verification hoops, less spontaneous trust.

Defense needs rules, not vibes

Relying on “this sounds like a real friend” is no longer sustainable. Organizations and families need explicit verification protocols that assume voice and video can lie. Strong habits look paranoid for a week, then normal.

Verification habits that reduce deepfake risk

- require a second channel check for money, passwords or sensitive info

- set shared code phrases for emergencies inside families or teams

- train staff to pause, verify and involve another person before large transfers

- treat unknown links or new bank details as hostile until verified

- log all unusual urgent requests for review, even if executed

Such measures feel inconvenient compared to blind trust. But blind trust is exactly what modern scams monetize.
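
The habits above can be written down as an explicit rule set rather than left to judgment on the call. The sketch below is only an illustration: the `CallRequest` fields, the `LARGE_TRANSFER` threshold and the function names are hypothetical, and a real policy would define its own categories and limits.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; a real policy sets its own.
SENSITIVE_KINDS = {"payment", "password", "sensitive_info"}
LARGE_TRANSFER = 10_000

@dataclass
class CallRequest:
    kind: str                               # what the caller is asking for
    amount: float = 0.0                     # transfer size, if any
    new_bank_details: bool = False          # caller supplied "updated" details
    urgent: bool = False                    # caller is pressing for speed
    second_channel_confirmed: bool = False  # verified via a known contact
    second_person_involved: bool = False    # a colleague reviewed the request

review_log = []  # unusual urgent requests, kept for later review

def missing_checks(req: CallRequest) -> list:
    """Return the verification steps still outstanding for this request."""
    missing = []
    needs_second_channel = req.kind in SENSITIVE_KINDS or req.new_bank_details
    if needs_second_channel and not req.second_channel_confirmed:
        missing.append("confirm via a second, known channel")
    is_large_payment = req.kind == "payment" and req.amount >= LARGE_TRANSFER
    if is_large_payment and not req.second_person_involved:
        missing.append("involve another person before transferring")
    return missing

def may_proceed(req: CallRequest) -> bool:
    """Log urgent requests either way, then allow only fully verified ones."""
    if req.urgent:
        review_log.append(req)
    return not missing_checks(req)
```

For example, an urgent 25,000 payment request with new bank details is blocked with two outstanding checks and still lands in `review_log`. How the call sounded is never an input to the decision.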

Tech safeguards trying to catch up

On the defensive side, research races to build detection: artifacts in audio, frame inconsistencies, watermarking, cryptographic signatures for verified media. Platforms explore “verified caller” frameworks where identity links to hardware, certificates or enterprise accounts.
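
As a rough illustration of the idea behind signed media, the sketch below attaches an authenticity tag to a clip and checks it later. It deliberately swaps in a shared-secret HMAC from the Python standard library as a stand-in: real verified-media schemes would more likely use public-key signatures and certificates, and the function names here are hypothetical.

```python
import hashlib
import hmac

def sign_media(key: bytes, media: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the raw media bytes."""
    return hmac.new(key, media, hashlib.sha256).hexdigest()

def verify_media(key: bytes, media: bytes, tag: str) -> bool:
    """Check the tag in constant time; any change to the bytes breaks it."""
    expected = sign_media(key, media)
    return hmac.compare_digest(expected, tag)
```

A clip that verifies has not been altered since someone holding the key tagged it. That says nothing about whether the original recording was genuine, which is one reason such schemes complement procedural checks rather than replace them.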

None of this is perfect or universal yet. Attackers iterate as quickly as defenders. Detection tools risk false positives or become obsolete against new models. Regulation lags behind technology, and cross-border enforcement remains fragile. For now, procedural discipline often matters more than futuristic scanners.

Everyday users as last line of defense

Most calls never pass through enterprise-grade tools. Ordinary users remain targets with limited protection. Practical awareness helps more than fear. A cautious response pattern can be simple: slow down, ask follow-up questions, confirm via known contacts, and never share one-time codes on calls initiated by unknown parties.

Personal red flags that should trigger doubt

- pressure to act within minutes with no written confirmation

- requests to bypass normal payment channels or approval chains

- refusal to switch to an established, verified contact method

- emotional manipulation focused on guilt, fear or secrecy

- technical instructions that grant remote access or expose 2FA codes

Training communities, colleagues and relatives to recognize these patterns works better than any isolated warning email.

How close are we to zero trust on calls

A full collapse of trust is not inevitable, but passive hope is not a strategy. The trajectory points toward a world where source verification, not surface familiarity, decides credibility. Voice alone will not be enough. Number alone will not be enough. Even video alone may not be enough.

The healthiest mindset treats calls as one puzzle piece. Authenticity comes from consistency across channels, stable procedures, and checks that do not depend on emotion or audio quality. In that world, deepfake scams lose their main weapon: the assumption that a familiar sound equals a safe request.

The technology will keep improving. So can verification culture. The future of trusted communication belongs not to those with the most realistic fakes, but to those who build systems where realism is never the only proof.